Automation introduces speed and scale, but it also introduces a new risk. Without clear measurement, teams cannot tell whether automation is strengthening quality or quietly adding instability. Release confidence, defect leakage, and delivery predictability all depend on knowing which signals actually reflect automation health.

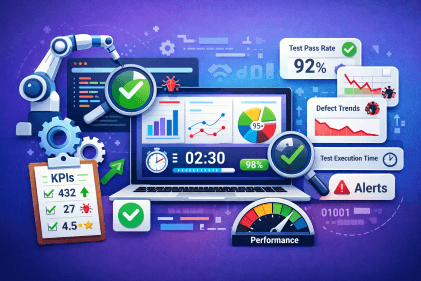

Defining the key metrics to track after automating your QA process makes automation outcomes visible in practical terms, from reliability and execution speed to defect detection and long-term sustainability.

This article breaks down the specific metrics that indicate whether QA automation is improving delivery or creating hidden friction, and explains how to interpret them in a way that supports consistent, predictable releases.

What Changes After Automating QA

Automation changes not only how tests are executed, but how quality is evaluated. Feedback shifts from human review to continuous system signals, and decisions increasingly rely on dashboards, pipelines, and automated reports.

As test volume and execution frequency increase, teams often mistake activity for effectiveness. More tests, faster runs, and frequent failures do not automatically translate into better quality. Without the right KPIs, automation can obscure risk instead of reducing it.

This shift makes it necessary to measure outcomes rather than effort. Understanding what truly changes after automating QA clarifies which metrics reveal progress, which expose instability, and which signal that automation is not delivering its intended value.

Key Metrics to Track After QA Automation

The key metrics to track after automating your QA process help teams understand whether automation is improving product quality or simply increasing activity without meaningful outcomes. For each metric below, the description covers what it measures, why it matters, and how it connects to release confidence and delivery stability.

Test Coverage Percentage

Test coverage percentage measures how much of an application’s functionality is validated by automated tests. It indicates the extent to which system behavior is checked automatically whenever changes are introduced.

Coverage is assessed by mapping automated tests to functional areas and workflows within the application. Higher coverage means more parts of the system are exercised during test runs, reducing the likelihood that changes introduce unnoticed failures as the product evolves.
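One way to compute coverage from a test-to-workflow mapping is sketched below. It assumes the team keeps an inventory of tracked functional areas and tags each automated test with the areas it exercises. The function name and the sample areas are hypothetical.

```python
def coverage_percentage(functional_areas: set[str], test_map: dict[str, set[str]]) -> float:
    """Return the share of tracked functional areas exercised by at least one test."""
    if not functional_areas:
        return 0.0
    # Union of every area touched by any test, limited to the areas we track.
    covered = set().union(*test_map.values()) & functional_areas
    return 100.0 * len(covered) / len(functional_areas)


# Hypothetical inventory: four tracked workflows, two automated tests.
areas = {"login", "search", "checkout", "profile"}
tests = {
    "test_login_flow": {"login"},
    "test_guest_checkout": {"login", "checkout"},
}
print(coverage_percentage(areas, tests))  # 50.0
```

Counting functional areas rather than lines of code keeps the number tied to workflows. Its accuracy depends entirely on how carefully tests are tagged.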

Execution Speed (Total Test Execution Time)

Execution speed measures how long all automated tests take to run from start to finish. This includes setup time, test execution, and result reporting.

Faster execution means teams receive feedback sooner after making a change. When tests run quickly, issues are discovered earlier in the development process, which makes fixes simpler and reduces delays in releasing new features.
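Total execution time is easier to act on when it is split into the phases named above. In the sketch below, `time.sleep` calls are hypothetical stand-ins for real setup, test execution, and reporting steps. In practice, CI timestamps usually provide the same breakdown.

```python
import time
from contextlib import contextmanager


@contextmanager
def timed(phase: str, timings: dict[str, float]):
    """Record the wall-clock duration of one phase of a test run."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[phase] = time.perf_counter() - start


def run_suite() -> dict[str, float]:
    timings: dict[str, float] = {}
    # The sleeps are hypothetical placeholders for the real phases.
    with timed("setup", timings):
        time.sleep(0.02)
    with timed("execution", timings):
        time.sleep(0.05)
    with timed("reporting", timings):
        time.sleep(0.01)
    timings["total"] = sum(timings.values())
    return timings


result = run_suite()
print({phase: round(seconds, 2) for phase, seconds in result.items()})
```

Tracking the phases separately shows whether slow feedback comes from the tests themselves or from environment setup and reporting.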

Pass and Fail Rate Stability

Pass and fail rate stability measures how consistent the overall results of an automated test suite are across repeated runs. It shows whether the same set of tests produces similar outcomes when the application has not changed.

Stable pass and fail rates indicate that test results can be used as a reliable signal for release decisions. When results fluctuate significantly between runs, teams lose confidence in automation and may hesitate to act on test outcomes.
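A simple way to quantify stability is to rerun the suite against an unchanged build and measure the spread of pass rates. The sketch below uses the population standard deviation for that spread. The run data is hypothetical, and the threshold for "too wide" is left to the team.

```python
from statistics import mean, pstdev


def pass_rate(run: dict[str, bool]) -> float:
    """Percentage of tests that passed in a single run."""
    return 100.0 * sum(run.values()) / len(run)


def stability(runs: list[dict[str, bool]]) -> tuple[float, float]:
    """Mean pass rate and its spread across runs of an unchanged build."""
    rates = [pass_rate(run) for run in runs]
    return mean(rates), pstdev(rates)


# Three hypothetical runs of the same four tests against the same build.
runs = [
    {"login": True, "search": True, "checkout": True, "profile": True},
    {"login": True, "search": True, "checkout": False, "profile": True},
    {"login": True, "search": True, "checkout": True, "profile": True},
]
avg, spread = stability(runs)
print(f"mean pass rate {avg:.1f}%, spread {spread:.1f} points")  # mean pass rate 91.7%, spread 11.8 points
```

A spread close to zero means the suite gives the same answer for the same build. A wide spread is a cue to look at the flakiness of individual tests.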

Defect Detection Rate

Defect detection rate measures the share of defects that automation catches before the product reaches users. It compares defects found during testing with those discovered after release.

A higher early detection rate means automation is catching issues closer to when they are introduced. This lowers the cost of fixing problems and reduces the risk of defects affecting customers.
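The comparison described above is commonly expressed as a ratio, sometimes called defect detection percentage. It divides pre-release defects by all known defects. The sketch below assumes the counts come from the team's defect tracker. If only automation-found defects should count, pass that narrower number as the first argument.

```python
from typing import Optional


def defect_detection_rate(found_before_release: int, found_after_release: int) -> Optional[float]:
    """Percentage of all known defects that were caught before release."""
    total = found_before_release + found_after_release
    if total == 0:
        return None  # no defects recorded yet, so the rate is undefined
    return 100.0 * found_before_release / total


# Hypothetical release: 45 defects caught in testing, 5 reported by users.
print(defect_detection_rate(45, 5))  # 90.0
```

Post-release defects keep arriving for weeks, so this rate is more meaningful once a release has been in production for a fixed observation window.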

Defect Density

Defect density measures the number of confirmed defects relative to a defined unit such as a feature, module, or user story. It shows where quality issues are concentrated rather than when defects are found.

After QA automation, defect density helps teams understand whether automated coverage is actually improving stability or just increasing test activity. Persistent defect clusters in the same areas often indicate shallow test design, missing edge cases, or insufficient data variation.

A declining defect density across releases suggests automation is strengthening product quality. Stable or rising defect density signals that tests may exist but are not validating the right behaviors, even if overall coverage appears high.

Tracking defect density alongside coverage and defect detection rate keeps the focus on real quality outcomes rather than test volume.
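A minimal sketch of that tracking is shown below. It computes defects per user story for each module, then flags modules whose density did not fall between two releases. The choice of user stories as the unit and all module names and counts are hypothetical.

```python
def defect_density(defects: dict[str, int], units: dict[str, int]) -> dict[str, float]:
    """Confirmed defects per unit (here: user stories delivered) for each module."""
    return {module: defects.get(module, 0) / count for module, count in units.items() if count}


def not_improving(previous: dict[str, float], current: dict[str, float]) -> list[str]:
    """Modules whose defect density stayed flat or rose between two releases."""
    return sorted(m for m in current if m in previous and current[m] >= previous[m])


# Hypothetical data for two releases; each module delivered the same stories.
stories = {"checkout": 3, "search": 4}
release_1 = defect_density({"checkout": 6, "search": 2}, stories)
release_2 = defect_density({"checkout": 6, "search": 1}, stories)
print(release_1)  # {'checkout': 2.0, 'search': 0.5}
print(release_2)  # {'checkout': 2.0, 'search': 0.25}
print(not_improving(release_1, release_2))  # ['checkout']
```

A module that stays on the "not improving" list across several releases is a candidate for deeper test design, such as more edge cases or varied test data.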

Flakiness Rate

Flakiness rate measures how often individual automated tests produce inconsistent results. A test is considered flaky when it passes in one run and fails in another without any change to the application code.

Tracking flakiness rate helps teams identify unreliable tests that weaken automation trust. Reducing flakiness improves the accuracy of failure signals and prevents wasted effort investigating issues that are not real defects.
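Following the definition above, a test counts as flaky if it both passed and failed on the same code revision. The sketch below applies that rule to a run history. It assumes each result is recorded as a `(test_name, commit_sha, passed)` tuple; that record format and the sample data are hypothetical.

```python
from collections import defaultdict


def flakiness_rate(results: list[tuple[str, str, bool]]) -> tuple[float, list[str]]:
    """Share of tests that both passed and failed on the same code revision."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test, commit, passed in results:
        outcomes[(test, commit)].add(passed)
    tests = {test for test, _ in outcomes}
    # Seeing both True and False for one (test, commit) pair means the result
    # changed without any code change, which is what makes a test flaky.
    flaky = sorted({test for (test, _), seen in outcomes.items() if len(seen) == 2})
    rate = 100.0 * len(flaky) / len(tests) if tests else 0.0
    return rate, flaky


history = [
    ("test_login", "a1b2c3", True),
    ("test_login", "a1b2c3", True),
    ("test_checkout", "a1b2c3", True),
    ("test_checkout", "a1b2c3", False),
    ("test_search", "a1b2c3", False),  # fails, then passes on a new commit:
    ("test_search", "d4e5f6", True),   # the code changed, so not flaky
]
rate, flaky = flakiness_rate(history)
print(f"{rate:.1f}% flaky: {flaky}")  # 33.3% flaky: ['test_checkout']
```

Keying on the commit matters. Without it, a test that correctly caught a regression and then passed after the fix would be misreported as flaky.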

Mean Time to Detect (MTTD)

Mean Time to Detect measures how long it takes to notice a defect after it has been introduced. This metric focuses on the speed of feedback rather than the number of defects found. Short detection times allow teams to respond quickly and limit how far defects spread across builds or environments. Automation is expected to reduce this time significantly.
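The moment a defect is introduced is rarely observed directly. The sketch below assumes it is approximated by the timestamp of the commit that caused the defect, and that detection is the time of the first failing automated run. The timestamps are hypothetical.

```python
from datetime import datetime, timedelta


def mean_time_to_detect(defects: list[tuple[datetime, datetime]]) -> timedelta:
    """Average gap between (introduced_at, detected_at) across defects."""
    if not defects:
        raise ValueError("no defects to average")
    gaps = [detected - introduced for introduced, detected in defects]
    return sum(gaps, timedelta()) / len(gaps)


# Hypothetical defects: one caught after 30 minutes, one after 90 minutes.
defects = [
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 9, 30)),
    (datetime(2024, 5, 6, 10, 0), datetime(2024, 5, 6, 11, 30)),
]
print(mean_time_to_detect(defects))  # 1:00:00
```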

Mean Time to Fix (MTTF)

Mean Time to Fix measures how long it takes to resolve a defect once it has been detected. This includes understanding the issue, correcting it, and confirming the fix. Shorter fix times indicate that feedback is clear and actionable, which usually reflects good collaboration between QA and development teams. Because MTTF is also widely used to mean mean time to failure, some teams label this metric MTTR (mean time to repair or resolve) to avoid confusion.
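Mean time to fix can be computed from two tracker timestamps per defect: when it was detected and when the fix was verified. The sketch below assumes those two fields are available. The sample data is hypothetical.

```python
from datetime import datetime
from statistics import mean, median


def fix_hours(defects: list[tuple[datetime, datetime]]) -> list[float]:
    """Hours from detection to verified fix for each (detected_at, fix_verified_at) pair."""
    return [(fixed - detected).total_seconds() / 3600 for detected, fixed in defects]


# Hypothetical defects fixed in 2, 4, and 12 hours.
defects = [
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 11, 0)),
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 13, 0)),
    (datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 21, 0)),
]
hours = fix_hours(defects)
print(f"mean {mean(hours):.1f} h, median {median(hours):.1f} h")  # mean 6.0 h, median 4.0 h
```

Reporting the median next to the mean shows when one long-running fix is pulling the average up. In this example the typical fix took 4 hours, while the mean suggests 6.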

Regression Execution Frequency

Regression execution frequency shows how often automated tests are run to ensure existing functionality still works after changes. Regression tests check that new updates do not break previously working features. Running regression tests frequently, such as daily or on every code change, reduces the risk of late-stage failures and supports more predictable releases.
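The two cadences mentioned above, daily runs and runs on every code change, can each be measured. The sketch below uses CI run timestamps for the first and a set of change identifiers (for example, commit SHAs) for the second. The function names and data are hypothetical.

```python
from collections import Counter
from datetime import date, datetime


def regression_frequency(run_times: list[datetime], start: date, end: date) -> dict:
    """Summarize how often the regression suite ran in an inclusive date window."""
    days = (end - start).days + 1
    per_day = Counter(t.date() for t in run_times if start <= t.date() <= end)
    return {
        "runs_per_day": sum(per_day.values()) / days,
        "days_without_run": days - len(per_day),
    }


def changes_with_regression_run(changes: set[str], tested: set[str]) -> float:
    """Percentage of code changes that triggered a regression run."""
    return 100.0 * len(changes & tested) / len(changes) if changes else 0.0


runs = [
    datetime(2024, 5, 6, 9, 15),
    datetime(2024, 5, 6, 16, 40),
    datetime(2024, 5, 8, 10, 5),
]
print(regression_frequency(runs, date(2024, 5, 6), date(2024, 5, 8)))
# {'runs_per_day': 1.0, 'days_without_run': 1}
print(changes_with_regression_run({"a1", "b2", "c3", "d4"}, {"a1", "b2", "c3"}))  # 75.0
```

An average of one run per day can still hide days with no run at all, which is why the sketch reports gaps separately.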

Parallel Execution Efficiency

Parallel execution efficiency measures how much time is saved by running tests at the same time instead of one after another. This is done by splitting tests across multiple machines or environments. Efficient parallel execution keeps total test time manageable as test suites grow. Without it, execution time grows roughly in proportion to the size of the suite.
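The sketch below estimates the benefit of splitting tests across workers. It uses the longest-processing-time-first heuristic, a common scheduling approach that may differ from any given runner's strategy. Efficiency is defined here as speedup divided by worker count. The per-test durations are hypothetical. The estimate ignores per-worker setup overhead, so real measurements should compare against observed wall-clock time.

```python
import heapq


def simulate_parallel(durations: list[float], workers: int) -> dict[str, float]:
    """Estimate parallel gains by always assigning the next-longest test to the least-loaded worker."""
    if not durations or workers < 1:
        raise ValueError("need at least one test and one worker")
    loads = [0.0] * workers  # a list of zeros is already a valid min-heap
    for d in sorted(durations, reverse=True):
        heapq.heappush(loads, heapq.heappop(loads) + d)
    wall_clock = max(loads)
    serial = float(sum(durations))
    speedup = serial / wall_clock
    return {
        "serial": serial,
        "wall_clock": wall_clock,
        "time_saved": serial - wall_clock,
        "speedup": speedup,
        "efficiency": speedup / workers,  # 1.0 means every worker stayed busy
    }


# Hypothetical per-test durations in minutes, split across two workers.
print(simulate_parallel([30, 20, 20, 10, 10, 10], workers=2))
# {'serial': 100.0, 'wall_clock': 50.0, 'time_saved': 50.0, 'speedup': 2.0, 'efficiency': 1.0}
```

Efficiency falls below 1.0 when one long test dominates a worker. That usually signals that the test should be split rather than that more machines are needed.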

Maintenance Effort

Maintenance effort tracks how much time is spent updating and fixing automated tests each week. This includes adjusting tests for application changes, updating test data, and resolving failures caused by automation issues. High maintenance effort often means tests are tightly coupled to the system and break easily. Lower maintenance effort makes automation sustainable over the long term.
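Weekly maintenance effort can be derived from a simple work log. The sketch below assumes each entry records an ISO week, a category, and hours spent. It treats three hypothetical categories, mirroring the activities listed above, as maintenance.

```python
from collections import defaultdict

# Hypothetical work-log categories that count as test maintenance.
MAINTENANCE_CATEGORIES = {"update_for_app_change", "update_test_data", "fix_automation_failure"}


def weekly_maintenance(entries: list[tuple[str, str, float]]) -> dict[str, tuple[float, float]]:
    """Per week: (maintenance hours, maintenance share of all QA automation hours)."""
    total: dict[str, float] = defaultdict(float)
    maintenance: dict[str, float] = defaultdict(float)
    for week, category, hours in entries:
        total[week] += hours
        if category in MAINTENANCE_CATEGORIES:
            maintenance[week] += hours
    return {
        week: (maintenance[week], 100.0 * maintenance[week] / total[week])
        for week in sorted(total)
        if total[week]
    }


log = [
    ("2024-W10", "write_new_tests", 30),
    ("2024-W10", "update_for_app_change", 6),
    ("2024-W10", "update_test_data", 4),
    ("2024-W11", "write_new_tests", 20),
    ("2024-W11", "fix_automation_failure", 12),
    ("2024-W11", "update_for_app_change", 8),
]
print(weekly_maintenance(log))
# {'2024-W10': (10.0, 25.0), '2024-W11': (20.0, 50.0)}
```

The share is often more telling than raw hours. In this example, total effort stayed flat while maintenance doubled from a quarter to half of the week.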

ROI of Automation

ROI of automation compares the effort saved through automation with the cost of creating and maintaining automated tests. It answers whether automation is providing real value to the organization. A positive ROI shows that automation reduces manual work and improves delivery efficiency rather than simply adding complexity or overhead.
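A common way to express this comparison is (effort saved minus effort invested) divided by effort invested. The sketch below measures everything in hours; multiply by an hourly rate if a monetary figure is needed. All inputs are hypothetical. `automated_hours_per_cycle` is assumed to mean the human time still needed per cycle, such as triage, rather than machine run time.

```python
def automation_roi(
    manual_hours_per_cycle: float,
    automated_hours_per_cycle: float,
    cycles: int,
    build_hours: float,
    maintenance_hours: float,
) -> float:
    """ROI as (effort saved - effort invested) / effort invested, all in hours."""
    saved = (manual_hours_per_cycle - automated_hours_per_cycle) * cycles
    invested = build_hours + maintenance_hours
    return (saved - invested) / invested


# Hypothetical figures: 40 h manual regression vs 5 h of triage, over 20 cycles,
# with 400 h to build the suite and 100 h of maintenance in the same period.
roi = automation_roi(40, 5, 20, build_hours=400, maintenance_hours=100)
print(f"ROI: {roi:.0%}")  # ROI: 40%
```

With the same costs but only 10 cycles, the result turns negative. This is why ROI depends heavily on how often the suite actually runs.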

Metrics That Indicate Automation Is Not Working Well

Poor signals often appear gradually and are easy to ignore without structured monitoring. These indicators help teams recognize when automation needs correction rather than further expansion.

High flakiness rates: Repeated failures that disappear on reruns force teams to manually verify results, reducing trust in automated outcomes despite increasing test volume.

Expanding test suites with weak coverage: Execution time grows while critical workflows remain insufficiently validated, leading to escaped defects despite more automated tests.

Slow execution speeds: Test runs take longer than development cycles, delaying feedback and limiting the ability to act on issues before release decisions are made.

Frequent CI failures: Pipelines fail due to unstable environments, brittle test dependencies, or inconsistent test data rather than actual product defects.

High maintenance overhead: A growing share of QA effort is spent fixing and updating tests instead of improving coverage, indicating fragile automation that does not scale well.

Recognizing these patterns early prevents automation from becoming a delivery constraint rather than a quality enabler.
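These patterns can be checked automatically by comparing two reporting periods. In the sketch below, every threshold constant is a hypothetical placeholder, and the `Snapshot` fields assume the team already collects the metrics described earlier.

```python
from dataclasses import dataclass

# Hypothetical thresholds; each team should set its own.
MAX_FLAKINESS_PCT = 2.0
MAX_NON_PRODUCT_CI_FAILURE_PCT = 50.0
MAX_MAINTENANCE_SHARE_PCT = 30.0


@dataclass
class Snapshot:
    test_count: int
    coverage_pct: float
    flakiness_pct: float
    suite_minutes: float
    feedback_budget_minutes: float      # longest acceptable wait for results
    ci_failures_not_product_pct: float  # failures caused by env, data, or test code
    maintenance_share_pct: float        # share of QA effort spent fixing tests


def warning_signs(prev: Snapshot, curr: Snapshot) -> list[str]:
    """Compare two periods and list the warning patterns that appear."""
    signs = []
    if curr.flakiness_pct > MAX_FLAKINESS_PCT:
        signs.append("high flakiness rate")
    if curr.test_count > prev.test_count and curr.coverage_pct <= prev.coverage_pct:
        signs.append("suite growing without coverage gains")
    if curr.suite_minutes > curr.feedback_budget_minutes:
        signs.append("slow execution")
    if curr.ci_failures_not_product_pct > MAX_NON_PRODUCT_CI_FAILURE_PCT:
        signs.append("frequent CI failures unrelated to product defects")
    if curr.maintenance_share_pct > MAX_MAINTENANCE_SHARE_PCT:
        signs.append("high maintenance overhead")
    return signs


last_month = Snapshot(400, 62.0, 1.0, 20, 30, 20, 15)
this_month = Snapshot(520, 61.5, 4.0, 35, 30, 70, 35)
for sign in warning_signs(last_month, this_month):
    print("-", sign)
```

Comparing periods, rather than reading a single snapshot, is what exposes the gradual drift described above. An example is a suite that grew by 120 tests while coverage slipped.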

How No Code Automation Improves These QA Metrics

No code automation affects QA metrics by simplifying how tests are created, maintained, and executed. This shifts automation outcomes toward greater stability and efficiency.

Faster test creation: Visual test building removes scripting overhead, allowing coverage to grow quickly without increasing complexity.

Lower flakiness: Structured execution reduces timing issues and fragile logic, leading to more consistent test results.

Reduced maintenance effort: Reusable steps limit the scope of updates when the application changes.

Faster execution: Built-in parallel runs shorten total test time and support frequent regressions.

Broader workflow coverage: Easier test creation enables validation of real user and business flows.

These effects improve the reliability of automation metrics and make performance trends easier to monitor over time.

How Sedstart Helps You Measure and Improve QA Metrics

Accurate QA metrics depend on consistent execution, clear visibility, and low noise in test results. Sedstart is a no-code automation platform whose features are designed both to generate reliable measurement data and to improve the metrics teams track after automation.

Reusable automation blocks: Standardized components ensure the same steps are executed consistently across tests. This produces comparable execution data across runs, making maintenance effort and pass/fail rate stability measurable over time.

Parallel and concurrent execution: Sedstart records execution duration per run and per environment. This enables clear measurement of execution speed and parallel execution efficiency while also reducing total test time.

UI and API automation support: By logging results across interface and service layers, Sedstart makes test coverage percentage and defect detection rate visible across the full system, not just the UI.

Versioning and approval controls: Every test change is tracked and approved, creating a clear link between test updates and metric shifts. This allows teams to identify whether changes in stability or failure rates are caused by product changes or test changes.

CI and CD integrations: Automated pipeline runs generate timestamped results for every change. This data supports accurate measurement of mean time to detect defects and regression execution frequency.

These capabilities ensure QA metrics are both measurable and meaningful, allowing teams to track real trends rather than isolated test results.

Maintain Reliable QA Outcomes With Measurable Automation

Automation delivers lasting value only when performance is continuously visible and controlled. The key metrics to track after automating your QA process provide the structure needed to monitor progress, correct drift, and sustain reliable releases over time.

Sedstart supports this approach by aligning automation design with measurable, stable outcomes.